Artificial intelligence has moved from novelty to a working tool that startups can adopt without a huge upfront commitment. Small teams can get a jump on feature builds and testing cycles by pairing human judgment with machine assistance.

The result often feels like faster learning loops and better use of scarce developer time. For a founder trying to stretch runway and ship something users want, that combination can change the game.

1. Faster Development Cycles

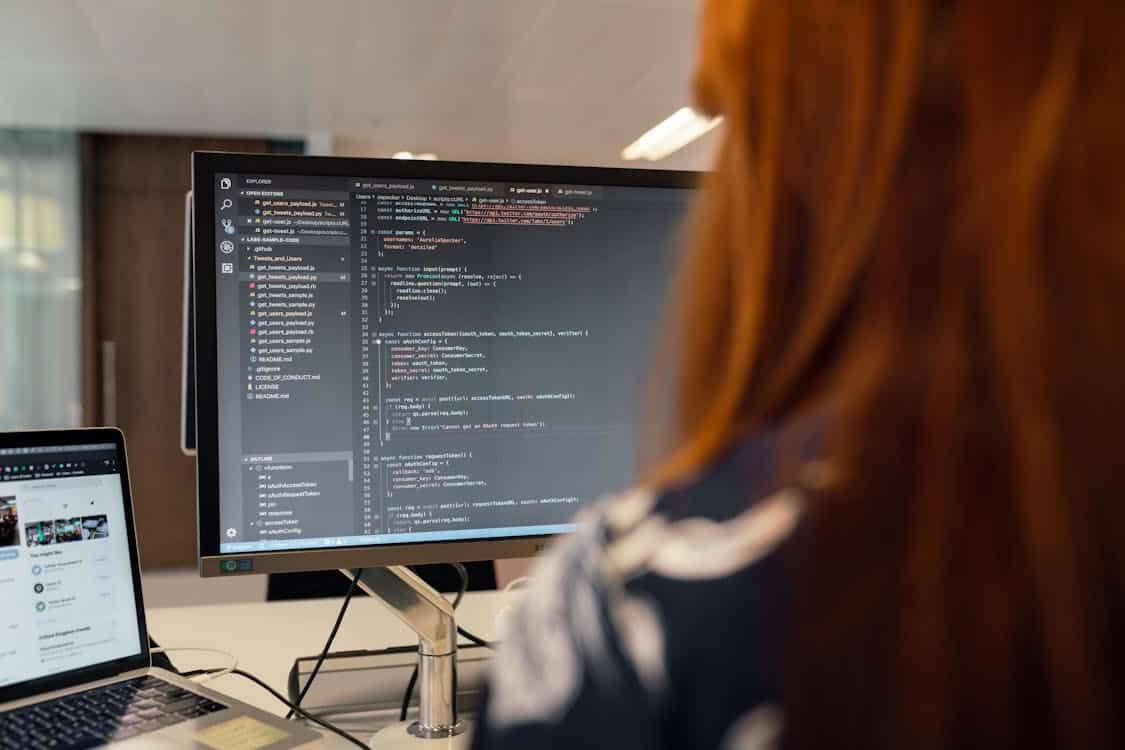

AI systems help speed routine tasks such as scaffolding, code completion, and template generation so developers can focus on harder problems that require human judgment. Models trained on code patterns offer suggestions that cut down repetitive typing and reduce time spent on boilerplate work.

When a team wants to move from idea to prototype quickly, these tools help get the ball rolling without sacrificing clarity. That accelerated pace lets product experiments reach users sooner and creates more chances to learn.

Continuous integration and delivery pipelines also gain from automated checks that flag regressions and suggest fixes before a human review is needed. Test generation and static analysis can run in tandem to provide rapid feedback, trimming the time between a code change and a stable build.

Startups that adopt these habits often find they can iterate on features multiple times in the same window where they previously managed a single release. That rhythm turns into a practical advantage when market signals change and rapid tweaks are required.

2. Cost Efficiency and Resource Management

AI-driven tooling reduces the need to expand headcount for tasks that can be partially automated, which is a major win when budget matters most. Automated tests, code review suggestions, and autoscaling rules cut operational overhead and allow small teams to cover more ground.

The financial gains show up as lower cloud bills and fewer cycles spent on repetitive chores, which stretches the runway. In plain terms, a lean team can accomplish what used to require many more hands.

Handing some routine flows to automation also frees skilled engineers to tackle product shaping and customer problems that machines cannot handle well. That shift of labor turns scarce human time into higher-value work and improves recruiting conversations, since roles feel more impactful.

When founders are judicious about where to apply machine help, the company often reaches meaningful milestones with a smaller burn. It is a case of working smarter and letting clever tools do the heavy lifting where appropriate. Blitzy is one example of such a tool: it optimizes resource management without requiring big investments in infrastructure.

3. Improved Product Quality and Testing

Automated test generation and anomaly detection spot edge cases that are easy to miss in manual cycles, and that reduces regressions after releases. Models can propose scenarios derived from real usage data and surface rare combinations of inputs that traditional suites do not cover.
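To make the idea of covering rare input combinations concrete, here is a minimal sketch in Python. It does not use a model at all. It simply enumerates boundary values and checks one invariant, which is a much simpler stand-in for the model-driven generation described above. The function `apply_discount` and the value lists are hypothetical and exist only for illustration.

```python
from itertools import product


def apply_discount(price_cents: int, percent: int) -> int:
    """Hypothetical function under test: price after a percentage discount."""
    if price_cents < 0:
        raise ValueError("price_cents must be non-negative")
    if not 0 <= percent <= 100:
        raise ValueError("percent must be between 0 and 100")
    return price_cents - (price_cents * percent) // 100


# Illustrative boundary values that hand-written suites often skip.
PRICES = [0, 1, 99, 100, 10**9]
PERCENTS = [0, 1, 50, 99, 100]


def find_violations():
    """Check every price/percent pair against one simple invariant."""
    violations = []
    for price, percent in product(PRICES, PERCENTS):
        result = apply_discount(price, percent)
        # Invariant: the discounted price is never negative or above the original.
        if not 0 <= result <= price:
            violations.append((price, percent, result))
    return violations


if __name__ == "__main__":
    print(find_violations())
```

An empty list means every combination held up. A generated suite would pick its inputs from real usage data rather than a fixed list, but the checking step looks much the same.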

That type of coverage leads to more robust releases and fewer emergency patches late at night. Customers notice stability, and a reputation for reliability can be a competitive edge when trust matters.

Code assistants and predictive bug detectors also help catch patterns tied to common failures, shortening the defect detection loop. Peer review remains vital, but these helpers raise the baseline quality so human reviewers focus on design choices and system architecture.

When bugs are found earlier, the cost of fixing them drops significantly and developer morale improves. In practice, fewer late-stage surprises mean teams sleep a little better and ship with more confidence.

4. Better Personalization and Faster Market Fit

Machine models can analyze user actions and tailor flows so individual users see content or features that match their needs, which improves engagement and retention. With a data-driven approach to onboarding and recommendation, small teams can test different approaches quickly and see which ones move key metrics.

That rapid experimentation helps teams find product-market fit by aligning features with what real users value. Small tweaks informed by behavior patterns can pay big dividends in conversion and loyalty.
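One way to judge whether an experiment really moved a metric is a two-proportion z-test on conversion counts. The sketch below is one common approach, not the only one, and the function name and the numbers are made up for illustration.

```python
from math import sqrt
from statistics import NormalDist


def two_proportion_z_test(conv_a, n_a, conv_b, n_b):
    """Compare conversion rates of variants A and B.

    Returns (z, p_value) for a two-sided test using the pooled standard error.
    """
    p_a, p_b = conv_a / n_a, conv_b / n_b
    pooled = (conv_a + conv_b) / (n_a + n_b)
    se = sqrt(pooled * (1 - pooled) * (1 / n_a + 1 / n_b))
    if se == 0:
        # Both variants converted 0% or 100%: no detectable difference.
        return 0.0, 1.0
    z = (p_b - p_a) / se
    p_value = 2 * (1 - NormalDist().cdf(abs(z)))
    return z, p_value


if __name__ == "__main__":
    # Made-up example: 100 of 1000 users convert on A, 130 of 1000 on B.
    z, p = two_proportion_z_test(100, 1000, 130, 1000)
    print(f"z = {z:.2f}, p = {p:.3f}")
```

A small p-value suggests the difference is unlikely to be noise, though with small samples it is worth letting the experiment run longer before acting on it.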

Segmentation informed by automated clustering and simple predictive models helps teams prioritize which cohorts to serve first, saving time on broad-based launches that miss the mark. Personalization does not need to be grand to work well; a few targeted changes in messaging or defaults can lift activation and retention in measurable ways.
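As a rough illustration of automated clustering, the sketch below runs plain k-means over two per-user features. The features, the sample users, and the starting centroids are all assumptions chosen for the example; a real team would likely reach for an existing library and more careful initialization.

```python
from math import dist


def kmeans(points, centroids, iterations=10):
    """Plain k-means: assign each point to its nearest centroid, then recompute."""
    centroids = list(centroids)
    assignments = []
    for _ in range(iterations):
        # Assignment step: index of the closest centroid for each point.
        assignments = [
            min(range(len(centroids)), key=lambda i: dist(p, centroids[i]))
            for p in points
        ]
        # Update step: move each centroid to the mean of its members.
        for i in range(len(centroids)):
            members = [p for p, a in zip(points, assignments) if a == i]
            if members:  # keep the old centroid if a cluster is empty
                centroids[i] = tuple(sum(axis) / len(members) for axis in zip(*members))
    return assignments, centroids


# Hypothetical per-user features: (sessions per week, features used).
users = [(1, 1), (2, 1), (1, 2), (9, 8), (10, 9), (8, 9)]

if __name__ == "__main__":
    assignments, centroids = kmeans(users, centroids=[users[0], users[3]])
    print(assignments)
    print(centroids)
```

Here the light users and the heavy users end up in separate groups, which is the kind of split a team might use to decide which cohort gets a tailored onboarding flow first.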

By working through simple experiments and learning fast, teams avoid spending months building features that users do not want. That focused approach tends to beat broad guessing and brings a practical sense of direction.

5. Data-Driven Decision Making and Forecasting

AI tools help teams read patterns from telemetry, making it easier to forecast usage trends, spot churn signals, and prepare for capacity needs. Simple models that predict user drop-off or conversion probability give founders early warning and guide where to invest scarce development time.
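A forecast does not have to start with a sophisticated model. The sketch below fits a straight line to weekly usage with ordinary least squares and projects it forward. The function names and the usage numbers are invented for the example, and a linear trend is only a reasonable first guess when growth is roughly steady.

```python
def fit_line(ys):
    """Least-squares fit of y = slope * x + intercept for x = 0, 1, 2, ..."""
    n = len(ys)
    if n < 2:
        raise ValueError("need at least two observations")
    xs = range(n)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def forecast(ys, steps_ahead):
    """Project the fitted line steps_ahead periods past the last observation."""
    slope, intercept = fit_line(ys)
    return slope * (len(ys) - 1 + steps_ahead) + intercept


if __name__ == "__main__":
    # Made-up weekly active user counts.
    weekly_active_users = [100, 120, 140, 160]
    print(forecast(weekly_active_users, steps_ahead=2))
```

Comparing each new week against the projection is a cheap early-warning signal: a run of weeks below the line is worth a closer look before it shows up as churn.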

When a team can see likely outcomes before they arrive, product priorities become clearer and roadmaps gain practical focus. That forward-looking stance keeps teams from being surprised and helps plan next steps with more confidence.

Predictive analytics also aid pricing experiments, feature prioritization, and operational planning by turning raw logs into usable signals for decision discussions. Instead of debating endlessly, teams can test hypotheses against modeled expectations and adjust plans based on concrete evidence.

That habit of checking model outputs and updating views keeps strategy grounded in observed behavior rather than wishful thinking. In short, data-informed choices reduce guesswork and let small teams punch above their weight.